Alertia is an in-car interface that detects when a driver's mind wanders. It uses biometric sensing and voice prompts to help you refocus — without taking control away from you.

Alertia turns the car cabin into a calm, ambient interface: no screens, more presence. A driver-monitoring layer (gaze, behavioral cues, heart rate, road context) estimates attention in real time. When it detects drift, the system intervenes: voice prompts help the driver re-anchor to the present without alarm or overload. The intent is not to take control but to strengthen the driver's agency.
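The source does not say how the monitoring layer fuses its cues. A minimal sketch follows. It assumes each cue is already normalized to 0..1 and combined by a hypothetical weighted average, with road context raising the attention the driver must keep. Drift is flagged only when the score stays below that requirement for several samples, and hysteresis stops a single glance away from triggering a prompt. All names, weights and thresholds are illustrative assumptions, not the project's model.

```python
from dataclasses import dataclass

# Hypothetical weights: the source lists the cues but not how they are fused.
WEIGHTS = {"gaze_on_road": 0.6, "behavior_ok": 0.25, "heart_rate_ok": 0.15}


@dataclass
class Reading:
    gaze_on_road: float   # share of the last window with eyes on the road, 0..1
    behavior_ok: float    # 1.0 = no distraction cues (e.g. phone in hand), 0..1
    heart_rate_ok: float  # 1.0 = heart rate inside the driver's baseline band, 0..1
    road_demand: float    # road context: 0 = empty motorway, 1 = dense urban traffic


def attention_score(r: Reading) -> float:
    """Weighted average of the normalized driver cues, in [0, 1]."""
    return sum(w * getattr(r, name) for name, w in WEIGHTS.items())


def required_attention(road_demand: float) -> float:
    """Assumed rule: busier road context raises the attention level required."""
    return 0.4 + 0.3 * road_demand


class DriftDetector:
    """Declares drift only after `hold` consecutive low samples, and clears it
    only once the score rises `margin` above the requirement (hysteresis)."""

    def __init__(self, hold: int = 3, margin: float = 0.1):
        self.hold, self.margin = hold, margin
        self.low_count = 0
        self.drifting = False

    def update(self, r: Reading) -> bool:
        score = attention_score(r)
        need = required_attention(r.road_demand)
        if self.drifting:
            # Stay in the drift state until attention clearly recovers.
            if score >= need + self.margin:
                self.drifting = False
                self.low_count = 0
        else:
            # Count consecutive low samples; any adequate sample resets the count.
            self.low_count = self.low_count + 1 if score < need else 0
            self.drifting = self.low_count >= self.hold
        return self.drifting


if __name__ == "__main__":
    det = DriftDetector()
    distracted = Reading(0.2, 0.5, 1.0, road_demand=0.8)
    print([det.update(distracted) for _ in range(3)])  # [False, False, True]
```

Requiring several consecutive low samples before intervening is one way to honor the "without alarm" goal: brief, normal mirror checks never reach the threshold.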

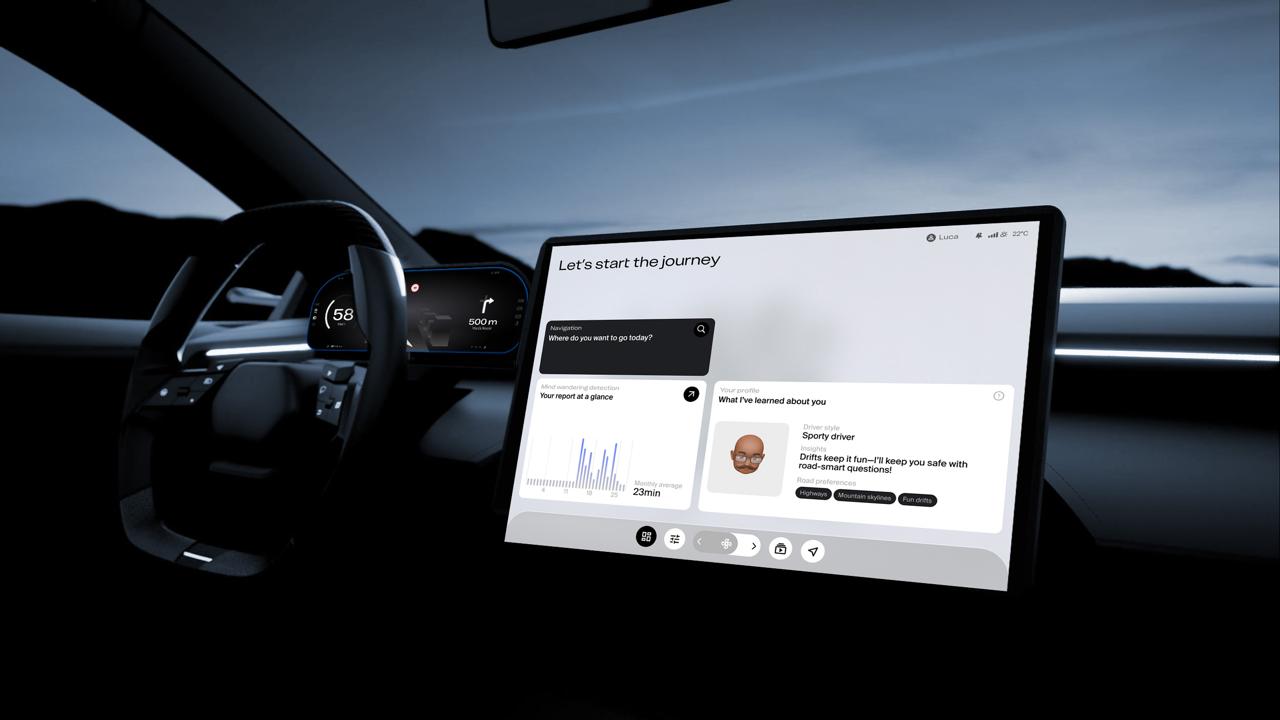

A voice user interface (VUI) keeps drivers' eyes on the road by giving hands-free, eyes-free, voice-based access to infotainment, which reduces visual-manual demand. A graphical user interface (GUI) complements the VUI with glanceable, context-aware cards and subtle micro-interactions on the instrument cluster, supporting comprehension without drawing attention away from the road.

The interaction follows a simple grammar. Proactive questions help the driver refocus on the road; they are grounded in, and optimised for, the current driving scenario. Active voice relies on a public, ADAS-scale voice grammar. Interaction is delivered through micro-dialogues, and if the driver remains unresponsive or distracted, the concept includes a failsafe handover scenario that brings the vehicle to a safe state.
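A micro-dialogue with a failsafe can be read as a short escalation loop: ask, wait, and hand over after repeated silence. The sketch below assumes a hypothetical `listen` callback standing in for the VUI (it speaks a prompt and returns the recognized reply, or `None` on timeout) and an arbitrary limit of two unanswered prompts; neither comes from the project.

```python
from enum import Enum, auto
from typing import Callable, Optional


class Outcome(Enum):
    REFOCUSED = auto()  # driver answered: stay in the voice-prompt scenario
    HANDOVER = auto()   # no answer: fail safe and move to a safe state


def run_micro_dialogue(
    prompts: list[str],
    listen: Callable[[str], Optional[str]],
    max_unanswered: int = 2,
) -> Outcome:
    """Ask short prompts in order; hand over after too many silences.

    `listen` is a hypothetical stand-in for the VUI.
    """
    unanswered = 0
    for prompt in prompts:
        reply = listen(prompt)
        if reply:  # any non-empty reply counts as re-engagement in this sketch
            return Outcome.REFOCUSED
        unanswered += 1
        if unanswered >= max_unanswered:
            break
    # Silence limit reached, or prompts exhausted without a reply.
    return Outcome.HANDOVER


def scripted(replies: list[Optional[str]]) -> Callable[[str], Optional[str]]:
    """Hypothetical test double: returns canned replies, then None (silence)."""
    it = iter(replies)
    return lambda prompt: next(it, None)


if __name__ == "__main__":
    prompts = ["Is there a car ahead of you?", "Which lane are you in?"]
    print(run_micro_dialogue(prompts, scripted([None, None])))  # Outcome.HANDOVER
```

Keeping the VUI behind a plain callback makes the escalation logic testable with scripted replies before any speech stack exists.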

Interaction flow — detection to response

| | Prevention | Detection | Scenario A: Activation | Scenario A: Intervention | Scenario B: Activation | Scenario B: Intervention |
|---|---|---|---|---|---|---|
| Customer actions | Select preferences for routes and entertainment | Drive while the system passively monitors attention | Respond to verbal prompts | Answer questions and follow prompts | Does not answer questions | Car takes control; driver observes |
| Visible system actions | Display personalized suggestions | No visible actions; the system operates in the background | Trigger verbal prompts or visual warnings | Engage the driver with contextual questions | Display an ADAS transition notification; assume control of the vehicle | Control steering and pedals to keep the vehicle safe |
| Invisible system actions | Analyze user preferences with machine learning | Monitor eye-tracking and heart-rate data from sensors | Compare distraction level against a threshold and decide whether to intervene | Process driver responses | Detect lack of response; activate Level 3 automation | Monitor safety parameters during automated control |
| Support processes | Update the database of user preferences and profiles | Calibrate sensors for accurate data collection | Maintain AI algorithms for distraction detection | Update VUI prompts | Ensure safety and redundancy protocols for automation | Update safety protocols and automation algorithms |
| Technology used | Machine learning for personalization; GUI for displaying information | Monochrome infrared-sensitive camera; infrared light units (700–1000 nm); heart-rate monitoring sensors | AI-driven analysis of sensor data | VUI using natural language processing | Level 3 automation for steering and pedals | Level 3 automation for vehicle control |

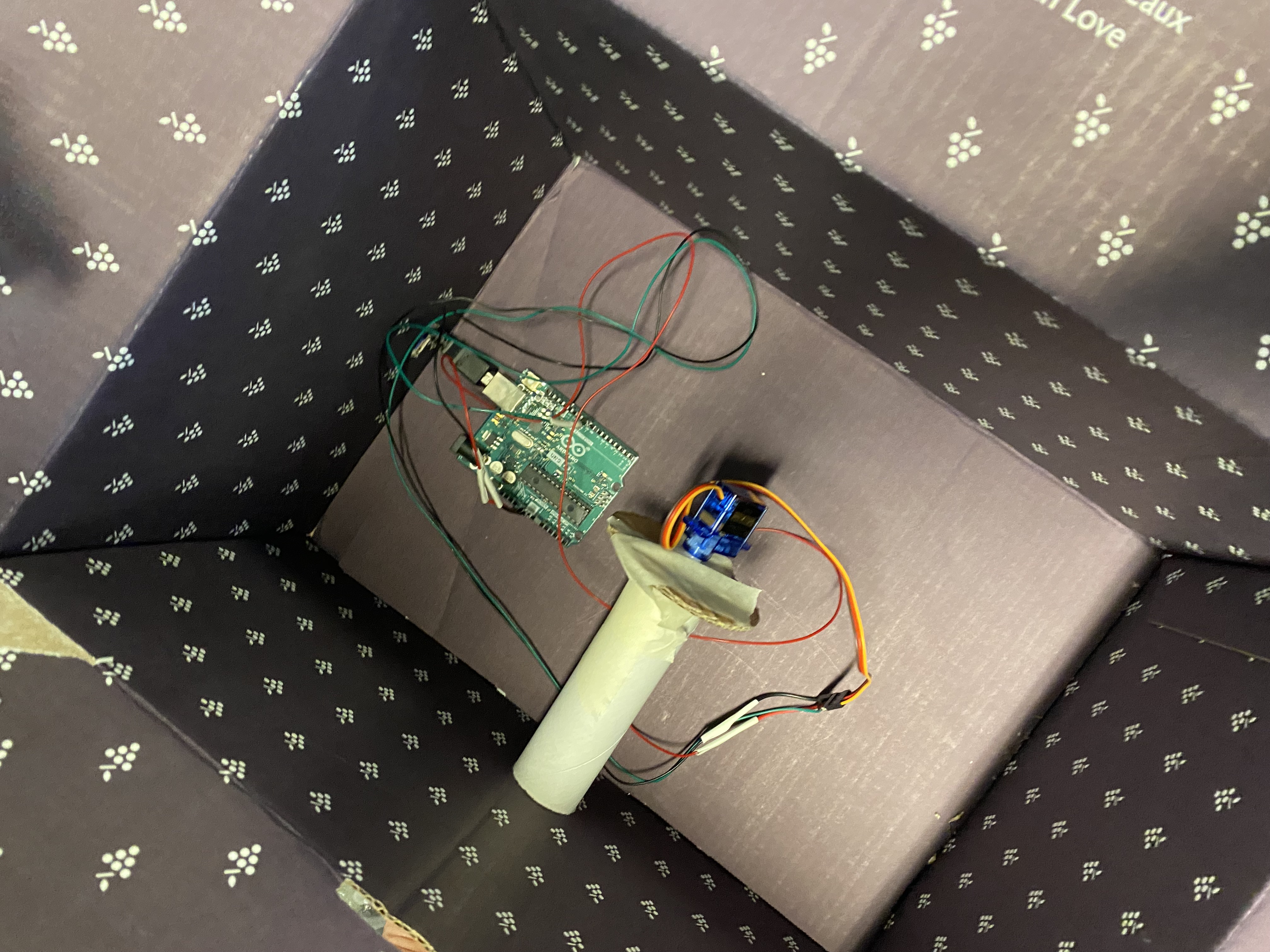

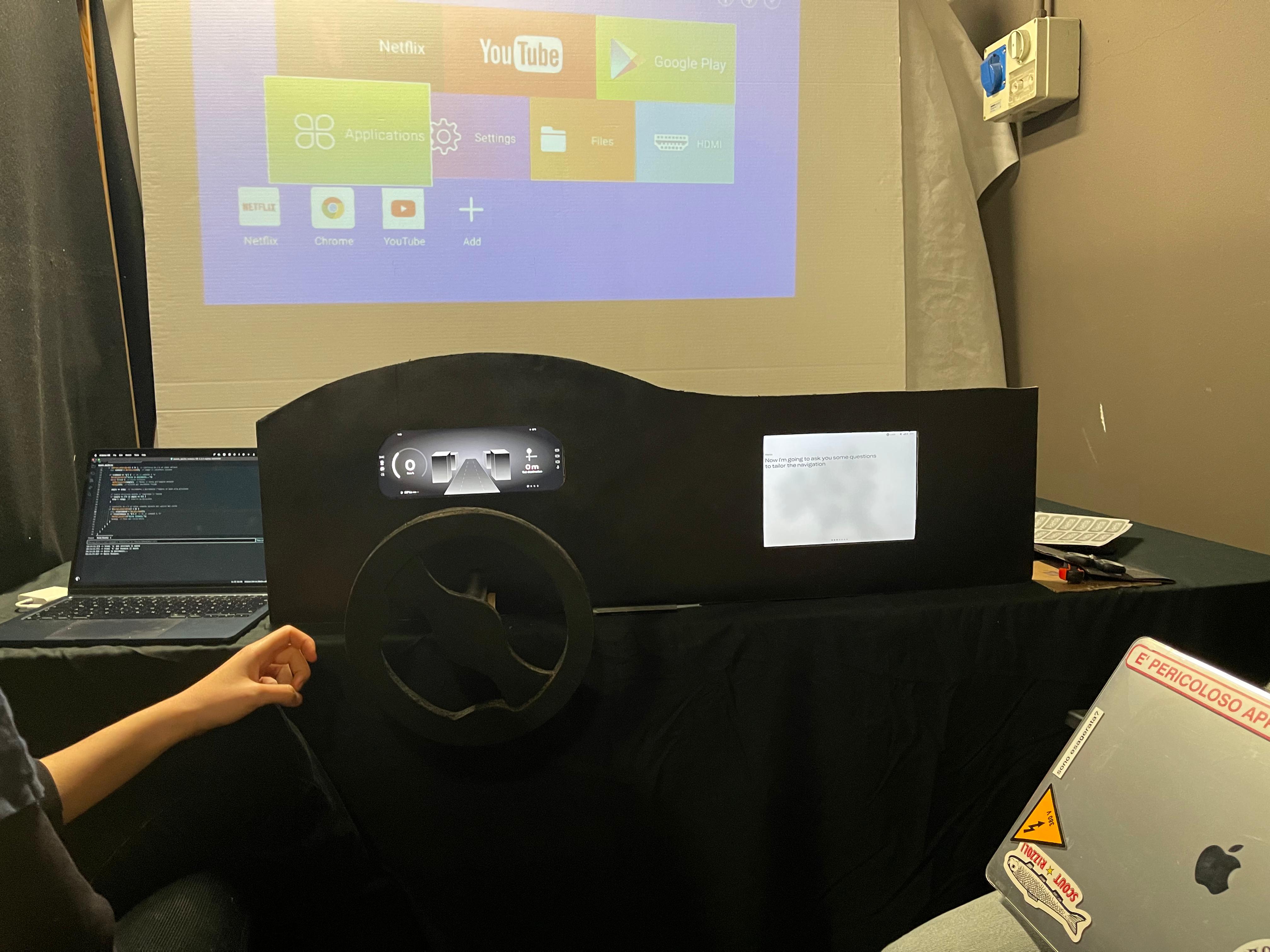

Developed with Italdesign, Alertia moved from desk research to co-creation: focus groups, stakeholder interviews, shadowing and MVP simulations. A low-fidelity cockpit (an Arduino-driven steering input, handover emulated through Wizard-of-Oz activation patterns during tests, and assisted placement) enabled rapid iteration. The UX/UI work defined cards, context-based micro-questions (following Guttman's hierarchy), and failsafe, agency-preserving handover scenarios.
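"Guttman's hierarchy" most likely refers to a cumulative (Guttman) scale, in which items are ordered so that passing a harder item implies passing every easier one. Under that reading, and with hypothetical example questions that are not from the project, the micro-questions could be graded by cognitive demand. A driver's answers would then be scored by the highest level reached and checked for consistency with the cumulative pattern:

```python
from typing import Sequence

# Hypothetical micro-questions ordered by increasing demand (index 0 = easiest).
QUESTIONS = [
    "Are you still with me?",                   # yes/no acknowledgement
    "Is there a car ahead of you?",             # single glance at the scene
    "What was the last road sign you passed?",  # short-term recall
    "How far is the next exit, roughly?",       # recall plus estimation
]


def guttman_level(answers: Sequence[bool]) -> int:
    """Level reached: number of consecutive passes starting from the easiest item."""
    level = 0
    for passed in answers:
        if not passed:
            break
        level += 1
    return level


def guttman_errors(answers: Sequence[bool]) -> int:
    """Count answers that deviate from the ideal cumulative pattern
    implied by the total number of passes (0 = perfectly scalable)."""
    k = sum(answers)
    ideal = [i < k for i in range(len(answers))]
    return sum(a != b for a, b in zip(answers, ideal))


if __name__ == "__main__":
    pattern = [True, True, False, False]  # passed the two easiest questions
    print(guttman_level(pattern), guttman_errors(pattern))  # 2 0
```

A high error count would suggest the question ordering does not match the difficulty drivers actually experience, which is one thing the MVP simulations could check.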

The loop was consolidated during a showcase, refining the Prevention → Activation → Intervention chain. All phases and artifacts are detailed in the project report.