Seeing without us

Neural glitch as design research

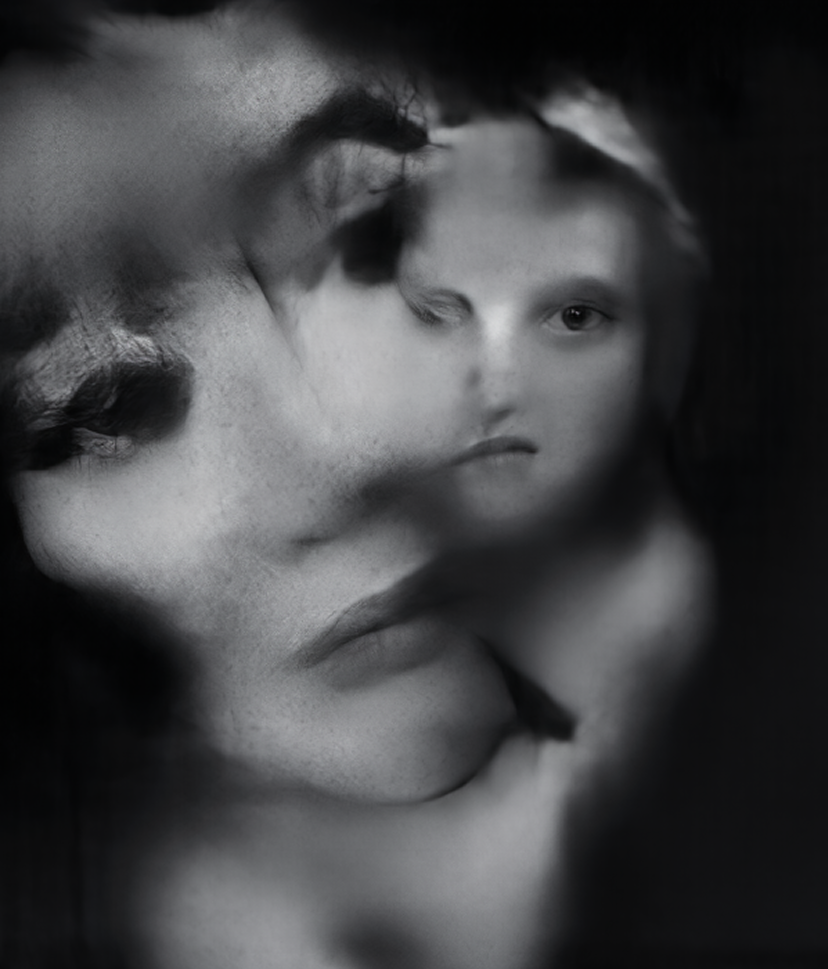

Generative Adversarial Networks (GANs) create images neither drawn by a human hand nor intended for a human eye. In Neural Glitch, Mario Klingemann disrupts fully trained GANs by randomly altering, deleting, or exchanging their learned weights. These controlled errors produce abstract compositions that resist habitual perception. The result is a non-human aesthetic in which machine vision becomes both subject and medium: shaped by human bias, yet not optimized for human recognition.
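Klingemann describes the technique as randomly altering, deleting, or exchanging the trained weights of a GAN. His own code is not shown here. The toy sketch below only illustrates those three kinds of perturbation on a small weight matrix stored as nested lists. The function name `glitch_weights`, its `rate` and `seed` parameters, and the noise range are hypothetical choices made for illustration.

```python
import random
from typing import List

Matrix = List[List[float]]


def glitch_weights(weights: Matrix, rate: float, seed: int = 0) -> Matrix:
    """Return a perturbed copy of a (non-empty, rectangular) weight matrix.

    Hypothetical illustration, not Klingemann's implementation: each weight is,
    with probability `rate`, either scaled by random noise ("alter"),
    set to zero ("delete"), or swapped with another random weight ("exchange").
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    rng = random.Random(seed)  # seeded so a given glitch can be reproduced
    rows, cols = len(weights), len(weights[0])
    # Flatten into a new list so the original trained weights stay untouched.
    flat = [w for row in weights for w in row]
    for i in range(len(flat)):
        if rng.random() >= rate:
            continue  # most weights survive, so the model still produces images
        op = rng.choice(("alter", "delete", "exchange"))
        if op == "alter":
            flat[i] *= rng.uniform(-2.0, 2.0)
        elif op == "delete":
            flat[i] = 0.0
        else:
            j = rng.randrange(len(flat))
            flat[i], flat[j] = flat[j], flat[i]
    # Restore the original rows x cols shape.
    return [flat[r * cols:(r + 1) * cols] for r in range(rows)]


trained = [[0.5, -1.2, 0.3], [0.8, 0.1, -0.4]]
glitched = glitch_weights(trained, rate=0.3, seed=42)
```

With `rate` near zero the output stays close to the trained behaviour. Raising it moves the model further from its learned mapping. In an actual GAN, the same idea would be applied to the generator's weight tensors before sampling, which this toy does not attempt.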

The value of ambiguity

While most AI-mediated creation optimizes for familiarity and photorealism, the glitch demonstrates the value of ambiguity. Meaning arises not from the image itself but from the encounter, where viewers read uncertainty much like a perceptual Rorschach test. By withholding clarity, these systems preserve interpretive freedom and avoid the narrowing effect of hyper-real outputs, which tend to fix a single reading in place.

Implications for interface design

The implications extend to interface design. Low-confidence states can be rendered as soft edges, hinted forms, or gentle noise that slow snap judgments and invite interpretation. Visual clarity can scale with model confidence, exposing system reasoning through lightweight cues such as confidence bands, attention highlights, or latent “scrubs” that let users move through nearby variations in the model's latent space. Error then becomes a tool for agency rather than a flaw, enabling reversible prompts, comparative previews, and calibration sliders that let users co-create with the model.
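The idea of letting clarity scale with confidence can be made concrete as a small mapping from a model score to rendering parameters. The sketch below assumes the model reports a confidence in [0, 1]. The names `RenderStyle`, `style_for_confidence`, the pixel and opacity ranges, and the `caution` parameter (standing in for a user-facing calibration slider) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RenderStyle:
    blur_px: float  # edge softness in pixels
    noise: float    # amplitude of an overlay noise texture, 0..1
    opacity: float  # how "committed" the rendered form looks, 0..1


def style_for_confidence(confidence: float,
                         caution: float = 1.0,
                         max_blur_px: float = 12.0,
                         max_noise: float = 0.6,
                         min_opacity: float = 0.35) -> RenderStyle:
    """Map a model confidence in [0, 1] to visual clarity.

    Low confidence gives soft edges, visible noise, and faint forms;
    high confidence gives crisp, noise-free, fully opaque output.
    `caution` is a hypothetical calibration-slider value: values above 1
    render mid-confidence results softer, values below 1 render them crisper.
    """
    if caution <= 0:
        raise ValueError("caution must be positive")
    c = min(max(confidence, 0.0), 1.0)  # clamp out-of-range scores
    c = c ** caution                    # reshape the curve per user preference
    uncertainty = 1.0 - c
    return RenderStyle(
        blur_px=max_blur_px * uncertainty,
        noise=max_noise * uncertainty,
        opacity=min_opacity + (1.0 - min_opacity) * c,
    )


tentative = style_for_confidence(0.4)            # soft, noisy preview
cautious = style_for_confidence(0.4, caution=2)  # same score, rendered softer
```

A linear mapping is the simplest choice. The exponent gives users one control over how readily the interface commits to an answer without changing the underlying score.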

Beyond anthropomorphic metaphors

Moving beyond anthropomorphic metaphors, Neural Glitch argues for abstract cues—texture, rhythm, haptics—that communicate state without simulating humanity. The experiment reframes failure as a design material, suggesting how ambiguity, transparency, and machine-native aesthetics can inform interaction patterns in future AI interfaces.

Credits & Sources

JoGan: Azaky, Art in the Age of Machine Learning (MIT Press, 2020) · Mario Klingemann, Neural Glitch (2019) · Masahiro Mori, The Uncanny Valley (1970) · Kleber & Trykszawska (2016) on the alien nature of its findings